Google’s Quiet Escape from the GPU Trap

Everyone’s watching the Nvidia supply chain. Google built a different road entirely. The numbers suggest it matters more than the market thinks.

Every major cloud provider is spending like the future depends on it. And in the public imagination, they’re all buying from the same supplier: Amazon, Microsoft, Oracle, billions flowing to Nvidia for GPUs that pull 700 watts per chip, throw off brutal heat, and arrive on someone else’s timeline.

Google is spending too. But when I traced where Google’s AI inference actually runs, I kept hitting a detail that doesn’t fit the standard GPU arms race storyline: a meaningful share of Google’s AI workload doesn’t touch Nvidia hardware at all. It runs on chips Google designed itself. That’s been true for years. What’s changed is that the gap between Google’s approach and everyone else’s is starting to show up in the constraint that actually matters for AI at scale: power. Not “do you have enough chips?” but “can you run the models you already have without blowing up your energy bill, your cooling footprint, and your grid interconnect queue?”

Google has been building custom AI silicon for roughly a decade. Its sixth generation went live in 2024. And the efficiency advantage is getting harder for the market to ignore.

The Power Wall

The most honest way to understand the AI boom is to treat it like an industrial buildout, not a software cycle.

A single ChatGPT-style query consumes about 0.0029 kWh of electricity, versus roughly 0.0003 kWh for a traditional Google search, according to a comparison published by Kanoppi. That’s roughly a 10x jump in energy for what, to a user, feels like the same action: type words, get an answer. And that number covers only inference, the act of running a trained model to produce outputs. Training is a different animal. But inference is the part that scales with real-world adoption. If AI becomes the default interface for search, productivity software, customer service, code generation, and media creation, inference becomes the metronome that sets the pace of power demand.
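To make the per-query gap concrete, here is a minimal Python sketch built on the two figures quoted above. The daily query volume is a hypothetical round number I picked for illustration, not a figure from any source, and the calculation ignores cooling and other facility overhead.

```python
# Per-query energy figures quoted above (kWh per query).
AI_QUERY_KWH = 0.0029
SEARCH_QUERY_KWH = 0.0003


def energy_ratio(ai_kwh: float, search_kwh: float) -> float:
    """How many times more energy an AI query uses than a search query."""
    return ai_kwh / search_kwh


def average_power_mw(kwh_per_query: float, queries_per_day: float) -> float:
    """Average continuous power (MW) needed to serve a daily query volume."""
    kwh_per_day = kwh_per_query * queries_per_day
    return kwh_per_day / 24 / 1000  # kWh per day -> kW -> MW


# ASSUMPTION: 1 billion queries per day is a hypothetical volume, for illustration only.
HYPOTHETICAL_QUERIES_PER_DAY = 1_000_000_000

ratio = energy_ratio(AI_QUERY_KWH, SEARCH_QUERY_KWH)
ai_mw = average_power_mw(AI_QUERY_KWH, HYPOTHETICAL_QUERIES_PER_DAY)
search_mw = average_power_mw(SEARCH_QUERY_KWH, HYPOTHETICAL_QUERIES_PER_DAY)

print(f"AI query uses about {ratio:.1f}x the energy of a search query")
print(f"Average power at 1B queries/day: AI {ai_mw:.1f} MW vs search {search_mw:.1f} MW")
```

Under that assumed volume, the same billion daily requests go from a load of about 12.5 MW to about 121 MW. The exact volume is arbitrary; the point is that the ratio carries straight through to the power bill.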

Now zoom out from a single query to the grid. BloombergNEF projects that U.S. data center power demand could reach 106 GW by 2035. The exact trajectory will be debated, but the direction is the point: data centers are becoming a first-order load on the electrical system, and AI is the accelerant.

Here’s where the hype hits physics. Hyperscalers can deploy software updates in days. They can raise capital in hours. But electrical infrastructure doesn’t scale on those timelines. Substations, transmission upgrades, gas turbines, new renewables, interconnection studies, cooling systems, backup power, and permitting all move at the speed of heavy industry and local politics. Every large cloud provider is running into the same bind: more AI demand means more compute, more compute means more power and cooling, more power means grid upgrades with long lead times, and those lead times don’t care about quarterly revenue targets.

Google’s leadership has been unusually direct about this. Sundar Pichai told investors that AI capacity is what “keeps us up at night.” I don’t read that as corporate melodrama. I read it as a CEO naming the real limiting reagent of the next decade.

If power is the wall, the strategic question shifts. It’s no longer “who has the best model?” It’s “who can run inference most cheaply per watt, per dollar of capex, and per unit of cooling?” That’s where Google’s custom silicon stops being a nerdy footnote and starts looking like a structural advantage.

A Decade of Custom Silicon

Google didn’t wake up in 2023 and decide it needed an alternative to Nvidia. The TPU story starts much earlier, before “generative AI” became a boardroom phrase.

Google’s own chronology of its TPU program traces the project back to around 2014, with TPU v1 deployed in 2015 for inference and scaled internally to over 100,000 units. The motivation was pragmatic: internal AI workloads, things like speech recognition and the early AlphaGo experiments, were growing fast enough that buying general-purpose compute was starting to look like the wrong answer.

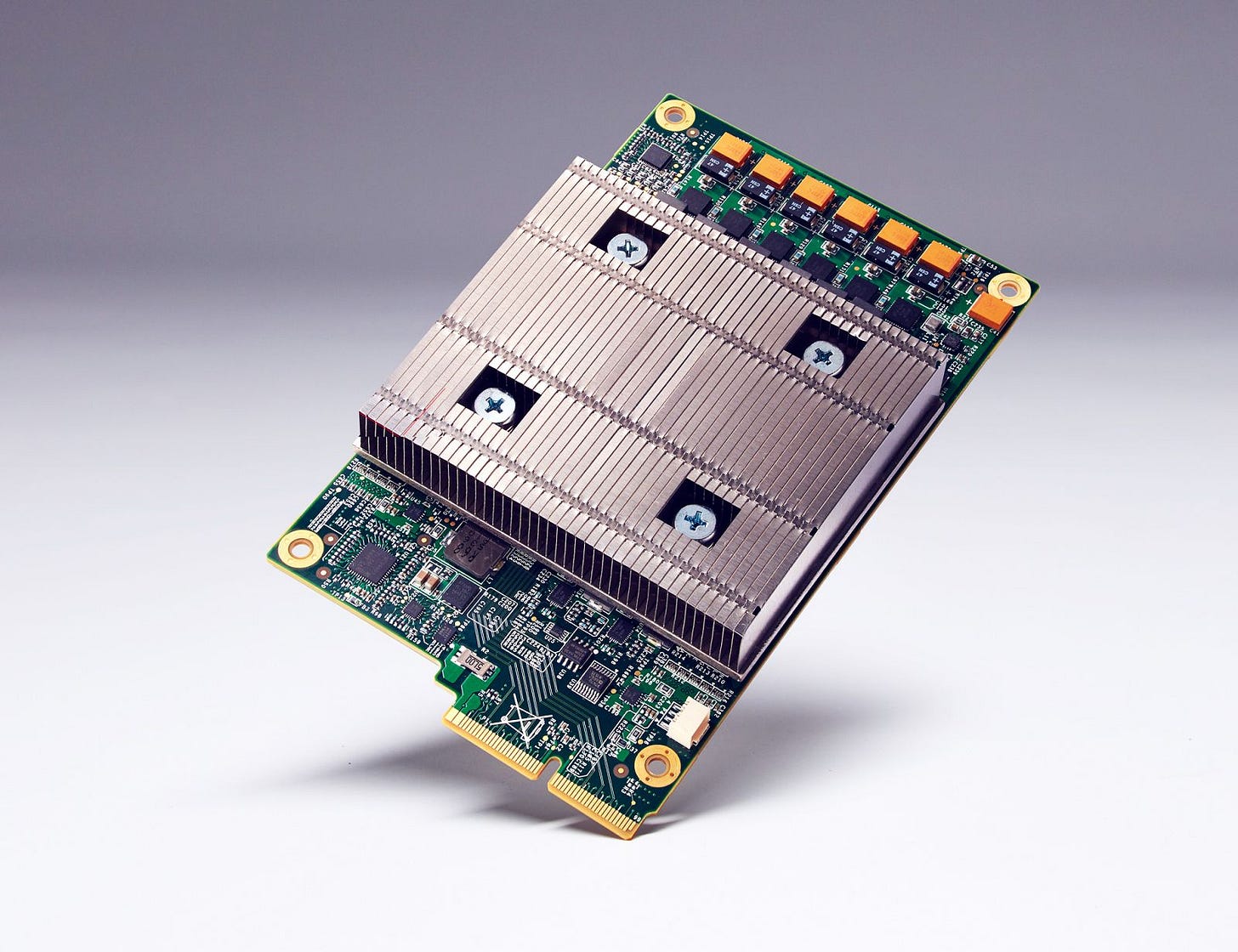

From there, the progression reads like a company turning a one-off optimization into an industrial platform. TPU v2 expanded into training and introduced the idea of interconnected TPU “pods.” TPU v3 pushed further with liquid cooling to manage thermal density. TPU v4 added more sophisticated networking, including optical circuit switching. TPU v5e emphasized efficiency. And then Trillium, the sixth generation that went live in 2024, is where the numbers get loud.

Google says Trillium delivers 4.7x more compute per chip than TPU v5e and is 67% more energy efficient than the prior generation. Even if you treat vendor claims with the skepticism they deserve, the direction is unmistakable: Google is iterating its AI silicon like a product line, not an experiment.

The other strategic inflection point was commercialization. Google launched Cloud TPUs to external customers in 2018, turning what could have remained a private advantage into a platform offering. And then there’s the adoption statistic that caught my attention most: Google says Cloud TPUs are now used by 90% of generative AI unicorns. If you believe the most valuable AI startups are unusually sensitive to compute cost and performance, that figure reads like revealed preference. They’re not buying a narrative. They’re buying what works for their unit economics.

The key distinction here is also the simplest. TPUs are purpose-built for AI training and inference. They’re not designed to be great at graphics, simulation, or general compute. That specialization isn’t a limitation. It’s the entire point. Google made an early bet that AI would become an infrastructure workload with repeatable patterns, and if that bet is right, specialized chips win because they can do more useful work per watt and per dollar.

The question is why this matters competitively right now, when Nvidia still dominates the headlines and the capex budgets. To answer that, you have to look at the bind everyone else is in.

The GPU Dependency Problem

The cloud market is still led by AWS and Microsoft. In Q4 2025, Synergy Research data put global cloud market share at roughly AWS 30%, Azure 22%, and Google Cloud 13% to 14%, with Oracle smaller at around 5% to 7%.

Those shares matter, but they can also mislead. Market share is a snapshot of accumulated scale. The more interesting question is what happens at the margin when the product being sold is no longer “compute and storage” but “AI capacity under power constraints.”

AWS and Microsoft aren’t ignoring the problem. They’re building custom silicon too: AWS has Trainium, Microsoft has Maia. But they’re not where Google is on the learning curve. Google is already on its sixth TPU generation. It’s had years to tune compilers, networking, cooling, software frameworks, and the operational playbook of running fleets of specialized accelerators. The moat isn’t just the chip. It’s the integration cost of making the chip useful at hyperscale.

Power is where the dependency becomes a bill you can’t negotiate. One industry comparison puts TPUs at roughly 300W versus Nvidia’s H100 at around 700W. It’s not an official spec-sheet comparison and shouldn’t be treated as one. But it’s directionally consistent with what you’d expect from a purpose-built inference accelerator versus a more general GPU architecture pushed to extremes. I kept coming back to a simple mental model: if you’re running millions or billions of inferences per day, watts become destiny. A few hundred watts of difference per chip compounds into a different cooling design, a different rack density, a different power delivery plan, and ultimately a different ability to scale.
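Here is a rough Python sketch of how that per-chip gap compounds across a fleet. The 300W and 700W figures are the ones from the industry comparison above; the fleet size and the PUE (power usage effectiveness, the facility overhead multiplier) are hypothetical values I chose for illustration. It also assumes one chip of each type does the same amount of work, which real workloads will not guarantee.

```python
# Per-chip power figures from the industry comparison quoted above (not official specs).
TPU_WATTS = 300
H100_WATTS = 700


def fleet_power_mw(watts_per_chip: float, chips: int, pue: float = 1.0) -> float:
    """Total facility power in MW for a fleet, scaled by a PUE overhead factor."""
    return watts_per_chip * chips * pue / 1_000_000


# ASSUMPTIONS: both values below are hypothetical, chosen only to show the scaling.
HYPOTHETICAL_CHIPS = 100_000
HYPOTHETICAL_PUE = 1.2

tpu_mw = fleet_power_mw(TPU_WATTS, HYPOTHETICAL_CHIPS, HYPOTHETICAL_PUE)
gpu_mw = fleet_power_mw(H100_WATTS, HYPOTHETICAL_CHIPS, HYPOTHETICAL_PUE)
gap_mw = gpu_mw - tpu_mw

print(f"TPU fleet: {tpu_mw:.0f} MW, H100 fleet: {gpu_mw:.0f} MW, gap: {gap_mw:.0f} MW")
```

With those assumptions the gap is 48 MW for a 100,000-chip fleet, which is substation-scale power that has to be sourced, delivered, and cooled.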

Supply chain risk is the other dimension. If multiple hyperscalers are bidding for the same GPU supply, they’re competing not just on price but on allocation. In a world where AI demand is spiking faster than manufacturing capacity can expand, depending on a single upstream supplier is a strategic vulnerability.

The most concrete signal I found that this isn’t academic came from Anthropic. The company signed a deal that gives it access to up to 1 million TPUs and expects to have over 1 GW of compute capacity online in 2026. Strip away the press release language and just sit with the physicality of that number: a gigawatt is power-plant scale. It’s industrial infrastructure.

Google Cloud CEO Thomas Kurian framed Anthropic’s decision explicitly as an efficiency play in the partnership announcement:

“Anthropic’s choice to significantly expand its usage of TPUs reflects the strong price-performance and efficiency”

That’s an unusually direct claim. TPUs aren’t just “available.” They’re compelling on price-performance and efficiency, which is exactly what you’d optimize for if you were staring at inference costs and power limits at scale.

And yet, Nvidia’s counter-narrative remains powerful. Jensen Huang has argued repeatedly that GPUs remain ahead, and the market still treats Nvidia as the picks-and-shovels monopoly of AI. I don’t think you can resolve this tension cleanly, because it’s workload-dependent. GPUs are versatile. They’re a known quantity. They have an enormous software ecosystem. For many workloads, especially where flexibility matters, GPUs will remain the default. But inference at massive scale is a narrow, repetitive, cost-sensitive workload that looks increasingly like infrastructure, and infrastructure rewards specialization.

Both truths can coexist: GPUs can be the best general answer while specialized chips become the best scaled answer. The interesting question is whether the market is pricing that second reality correctly.

The Numbers That Matter

If the TPU strategy is real, it should show up somewhere measurable. Not in a product demo. In revenue growth, customer adoption, and the willingness to spend.

Alphabet’s Q4 and full-year 2025 results provide the cleanest snapshot. In its earnings release, Alphabet reported Google Cloud Q4 2025 revenue of $17.7 billion, up 48% year over year, with the business ending 2025 at an annual run rate of over $70 billion. Pichai put a fine point on it:

“It was a tremendous quarter… Google Cloud ended 2025 at an annual run rate of over $70 billion, representing a wide breadth of customers, driven by demand for AI products”

That growth rate matters because it’s happening while Google is still the third-largest cloud provider by share. If AWS and Azure are growing in the 20% to 30% range, and Google Cloud is growing at 48%, something is pulling demand toward Google at the margin. It might be pricing. It might be product packaging. It might be enterprise sales execution. But it’s difficult to ignore that Google’s AI stack includes a differentiator most competitors can’t match quickly: a mature, in-house accelerator platform that’s already several generations deep.

Alphabet’s broader financial position gives it the ability to press the advantage. For FY2025, Alphabet reported $402.8 billion in revenue and $129 billion in operating income, a 32% operating margin, with $73.3 billion in free cash flow, all detailed in the same earnings release.
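The headline ratios are easy to check from the reported figures. The short Python sketch below recomputes the operating margin, the free cash flow margin, and the Cloud run rate. The run rate here is a simple Q4-times-four annualization, which is my assumption about how the figure is derived; the company may calculate it differently.

```python
# Figures quoted above from Alphabet's Q4 and FY2025 earnings release (USD billions).
FY2025_REVENUE = 402.8
FY2025_OPERATING_INCOME = 129.0
FY2025_FREE_CASH_FLOW = 73.3
CLOUD_Q4_REVENUE = 17.7


def margin_pct(numerator: float, revenue: float) -> float:
    """Express a line item as a percentage of revenue."""
    return 100 * numerator / revenue


operating_margin = margin_pct(FY2025_OPERATING_INCOME, FY2025_REVENUE)
fcf_margin = margin_pct(FY2025_FREE_CASH_FLOW, FY2025_REVENUE)

# ASSUMPTION: run rate approximated as the latest quarter multiplied by four.
cloud_run_rate = CLOUD_Q4_REVENUE * 4

print(f"Operating margin: {operating_margin:.1f}%")
print(f"Free cash flow margin: {fcf_margin:.1f}%")
print(f"Cloud annualized run rate: ${cloud_run_rate:.1f}B")
```

The numbers hang together: a 32.0% operating margin, an 18.2% free cash flow margin, and a Cloud run rate of $70.8 billion, consistent with the "over $70 billion" in the release.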

That’s the strength side of the ledger. Now the risk side.

Alphabet guided $175 to $185 billion in capex for 2026. That number is more than the GDP of most countries. The market’s reaction has been to treat it as a margin threat rather than a moat investment. Alphabet stock has pulled back roughly 10% to 12% from its peaks amid capex concerns. Investors see spending and worry about returns.

The tension here is the one that matters most to me: if TPUs are such an efficiency advantage, why does Google need to spend up to $185 billion in a year? I don’t think the answer is that the efficiency story is false. I think the answer is that efficiency changes the slope, not the destination. If AI inference demand is compounding, even a dramatically better chip still requires an enormous buildout. Lower energy per query doesn’t eliminate the power wall. It lets you climb it faster, at lower cost, and with less heat.

But whether this capex translates into proportional revenue growth and improving margins, or whether it becomes a treadmill where every incremental dollar of cloud revenue requires another dollar of infrastructure, remains genuinely unclear. The honest way to watch is to track whether Google Cloud can sustain outsize growth while expanding operating leverage over time.

What the Market Might Be Missing

Google has something close to end-to-end vertical integration in AI: custom silicon, a cloud platform that sells that silicon as a service, and consumer distribution through products like Search and YouTube that generate enormous inference demand internally. That internal demand creates a feedback loop. Google can justify building hardware at scale, which improves its economics, which makes its cloud offering more competitive, which attracts more external demand, which justifies more scale.

Kurian has been explicit about this. In a Fortune interview, he described Google Cloud’s position as the fastest-growing major cloud provider, attributing it to a complete “stack” of technology. AWS and Azure have huge stacks too, but Google’s TPU maturity is unusually deep, and the energy constraint makes that depth more valuable over time.

I also think the market is still catching up to a second-order shift: the industry’s spend mix will likely move from training-heavy to inference-heavy. Training is episodic. You train a frontier model, then you serve it. Inference is the annuity. It’s the part that turns AI from a research project into a utility. Specialized chips tend to look better in inference economics, particularly at scale. One analyst projection suggests that the split of the AI accelerator market between GPUs and custom ASICs could shift from roughly 95/5 to 70/30 as custom ASICs scale. I treat this as a directional hypothesis: as inference becomes the dominant workload, specialized silicon takes share.

But there are important limitations. The same analysis notes that TPU advantages can be scale-dependent, excelling in clusters of 1,000+ chips with optical circuit switching, and being less advantageous at smaller deployments. That matters because most enterprises don’t start with thousand-chip clusters. They start with pilots, small deployments, and uncertain demand. GPUs, with their flexibility and broad software compatibility, remain the safer default in that phase.

This is why Jensen Huang’s position and Google Cloud’s 48% growth can coexist without either being wrong. GPUs can remain the best answer for flexible, general workloads, while TPUs win on the narrow but gigantic corridor of scaled inference and training inside tightly integrated systems.

The unresolved uncertainty is magnitude. You’ll hear people throw around “10x cheaper” claims in casual conversation. I don’t think that’s the right baseline. The more plausible range tends to be 2x to 4x cost advantage depending on workload and scale, which is still enormous. Two to four times better unit economics, compounded across billions of inferences, is the difference between a profitable AI product and one that bleeds cash indefinitely.
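A toy calculation shows why a 2x to 4x range is enough to flip the economics. Every input in this Python sketch is hypothetical: the price, the baseline serving cost, and the daily volume are invented for illustration and do not describe any real product.

```python
def daily_profit(price_per_1k: float, cost_per_1k: float, inferences_per_day: int) -> float:
    """Daily gross profit (USD) from serving inferences at a given price and cost."""
    return (price_per_1k - cost_per_1k) * inferences_per_day / 1000


# ASSUMPTIONS: all three values are hypothetical, chosen only to show the mechanism.
HYPOTHETICAL_PRICE_PER_1K = 1.00          # revenue per 1,000 inferences, USD
HYPOTHETICAL_BASELINE_COST_PER_1K = 1.50  # serving cost per 1,000 inferences, USD
HYPOTHETICAL_INFERENCES_PER_DAY = 1_000_000_000

results = {}
for advantage in (1, 2, 4):  # 1 = baseline, 2 and 4 = the cost-advantage range above
    cost = HYPOTHETICAL_BASELINE_COST_PER_1K / advantage
    results[advantage] = daily_profit(
        HYPOTHETICAL_PRICE_PER_1K, cost, HYPOTHETICAL_INFERENCES_PER_DAY
    )
    print(f"{advantage}x cost advantage: ${results[advantage]:,.0f} per day")
```

In this made-up scenario the baseline loses $500,000 a day, while the same product earns $250,000 a day at a 2x cost advantage and $625,000 at 4x. The specific dollars mean nothing; the sign change is the point.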

The other open question is competitive convergence. If AWS Trainium and Azure Maia achieve comparable efficiency and software maturity within a couple of years, Google’s advantage narrows. But the key point is that Google isn’t starting that race today. It started around 2014.

What It Means

After spending time in the TPU details, I came away with a view that’s less about chips and more about constraints.

Google has built something genuinely difficult to replicate quickly: a decade-long custom silicon program integrated into a cloud platform that’s currently growing faster than its larger rivals, tied into consumer distribution that can generate the largest inference volumes on earth. That combination is rare. Most companies have one or two of those pieces. Google has all of them.

The power constraint is what turns this from an engineering curiosity into a competitive story. If AI queries really are an order of magnitude more energy-intensive than traditional search, and if U.S. data center demand really is bending toward triple-digit gigawatts by the mid-2030s, then the winners aren’t just the companies with the best models. They’re the companies that can run models cheaply and reliably when the grid becomes the bottleneck. Controlling your own inference silicon reduces supply chain exposure, improves cost predictability, and lets you optimize the entire stack around the real limit: watts and cooling.

Who benefits is straightforward: platforms that can run inference at scale on their own accelerators. Google is the clearest example today. AWS and Microsoft may get there, but they’re chasing an incumbent in specialized AI silicon, not inventing the category.

Who gets pressured is equally straightforward: hyperscalers that remain dependent on Nvidia for the majority of AI compute face compounding cost and supply chain disadvantages as inference volumes grow. Nvidia itself can still dominate training and still be a spectacular business while losing share in the inference layer over time. That’s how infrastructure markets mature.

What I’m watching now is less about product launches and more about tripwires. If Google Cloud’s growth rate decelerates into the 20% to 30% band for two consecutive quarters, the structural advantage story weakens. If AWS Trainium or Azure Maia demonstrates comparable efficiency at production scale with real customer adoption, the gap narrows. If Google Cloud operating margins decline even as revenue rises, the capex may be destroying value rather than building a moat. And if a major AI company reverses course and shifts workloads away from TPUs back to GPUs, it would be a sharp signal that the economics weren’t as durable as they looked.

Nobody knows yet whether Alphabet’s $175 to $185 billion capex plan produces a proportional jump in revenue and profit, or whether it becomes a permanent tax on the business. The only way to find out is to watch the numbers over the next several quarters.

But the physics don’t negotiate. Power is the wall, and efficiency is the ladder.

Everyone in AI is chasing the next breakthrough model. But models are only as useful as your ability to run them, cheaply, at scale, without melting the power grid. Google spent a decade building chips that do exactly that. Whether the market recognizes it now or in two years, the constraint doesn’t change.

The cheapest inference wins.