Bloom Energy and the 800-Volt Question

A fuel cell built for Mars happens to output the exact voltage Nvidia's next-gen AI racks demand. The alignment is real. The execution history is not reassuring.

KR Sridhar built his first fuel cell for Mars. The idea was to convert Martian CO2 into oxygen and methane, keeping astronauts alive on a planet with no grid, no infrastructure, no fallback. That was 2001. Sridhar had been running the Space Technologies Laboratory at the University of Arizona, and NASA wanted a system that could sustain life where nothing else could. Twenty-four years later, the technology he adapted for Earth is powering something nobody at NASA imagined: the server racks training the next generation of AI models.

The connection between Mars fuel cells and machine learning isn’t obvious. But when I traced the technical specs, the link turned out to be weirdly specific. Nvidia’s next-generation AI architecture runs on 800-volt direct current. Bloom’s solid oxide fuel cells output 800-volt direct current. The alignment between those two specs has nothing to do with branding. It’s a voltage match, pure and simple. And it happens to sit at the center of what might be the most important infrastructure bottleneck in the global economy right now. A small fuel cell company from San Jose, built on NASA research, may have stumbled into exactly the right position at exactly the right time.

Whether it matters depends on a question the filings can’t answer yet.

The Power Problem Nobody Solved

Here’s the basic math of the AI buildout: the companies racing to train frontier models have functionally unlimited capital. What they don’t have is watts.

U.S. data center power demand could reach 106 GW by 2035, according to BloombergNEF. To put that in context, 106 gigawatts running around the clock is roughly equivalent to the total electricity consumption of Japan. That’s just American data centers. That’s just the next decade. And these projections keep getting revised upward, not down, because every generation of AI hardware consumes more power than the last.
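Gigawatts measure power and national consumption is measured in energy, so the Japan comparison only works if the load is treated as running flat out all year. A minimal sketch of that conversion follows; the constant-load assumption is a simplification for illustration, not part of the BloombergNEF projection.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def continuous_gw_to_twh_per_year(gigawatts: float) -> float:
    """Annual energy in TWh delivered by a constant load of `gigawatts`."""
    return gigawatts * HOURS_PER_YEAR / 1000  # GWh -> TWh


projected_demand_gw = 106  # 2035 figure cited in the text
annual_twh = continuous_gw_to_twh_per_year(projected_demand_gw)
print(f"{projected_demand_gw} GW around the clock is about {annual_twh:.0f} TWh per year")
```

Real data centers do not run at a perfectly flat load, so the true annual energy figure would be somewhat lower.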

The supply response has been overwhelmingly gas-fired. The U.S. now leads the world in new gas power development. Globally, the pipeline rose 31% to 1,047 GW, with 249 GW of new gas capacity entering development in 2025 alone. The scale of the buildout is staggering, driven almost entirely by data center demand. The world is betting on natural gas as the bridge fuel for the AI era, and it’s doing so at a pace that would’ve seemed absurd five years ago.

But building gas plants takes time. Getting them connected to the grid takes even longer. Grid connection delays are now measured in years, not months. Permitting, environmental review, transmission buildout, interconnection queues: the bottleneck has less to do with generation capacity in the abstract and everything to do with getting electrons from where they’re made to where they’re needed, on a timeline that matches the hyperscalers’ ambitions. Microsoft, Amazon, Meta, and Oracle are all spending tens of billions on AI infrastructure. They’ve secured the land. They’ve ordered the chips. And in many cases, they’re sitting there waiting for the power to show up. The mismatch between capital deployment speed and grid expansion speed is the defining constraint of the AI buildout.

Meanwhile, the power demands per rack are exploding. Nvidia is preparing the data center industry for 1 MW racks and 800-volt DC power architectures. Their Rubin-era Kyber racks are expected to draw 600 kW or more per rack by 2027, with a full megawatt per rack on the roadmap beyond that. Think about that. A medium-sized AI training cluster would need its own small power plant.

The hyperscalers have the money to build. They have the chips to fill the racks. What they can’t buy at any price is a faster grid connection. That’s the gap Bloom claims to fill, and the claim rests on physics more than marketing.

The 800-Volt Coincidence

Let me slow down on the technical piece, because this is where the story either holds or falls apart.

Nvidia’s next-generation AI factories are being designed around 800V high-voltage direct current architecture. Their own engineering blog describes the payoff this way:

“800 VDC infrastructure... offers increased scalability, improved energy efficiency”

The shift to 800V DC is Nvidia’s answer to the power density problem. When you’re pushing 600 kW through a single rack, every percentage point of efficiency loss matters. Traditional data centers take AC power from the grid, run it through a series of transformers and rectifiers to convert it to DC for the servers, and lose energy at every step. The more conversion stages, the more waste heat, the more cooling you need, the more power you burn just to deal with the power you already burned.
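To see why conversion stages compound, here is a rough sketch that multiplies per-stage efficiencies and works out the waste heat at a 600 kW rack. Every per-stage efficiency below is a hypothetical placeholder chosen for illustration, not a measurement from Nvidia, Bloom, or any specific site.

```python
from math import prod

RACK_LOAD_KW = 600  # per-rack draw cited in the text


def chain_efficiency(stage_efficiencies):
    """Overall efficiency of conversion stages connected in series."""
    return prod(stage_efficiencies)


# HYPOTHETICAL stage efficiencies, for illustration only.
ac_chain = [0.98, 0.96, 0.97, 0.98]  # e.g. transformer, UPS, rectifier, rack-level DC-DC
dc_chain = [0.98]                    # e.g. a single DC-DC stage

for name, chain in (("multi-stage AC path", ac_chain), ("shorter DC path", dc_chain)):
    eff = chain_efficiency(chain)
    input_kw = RACK_LOAD_KW / eff        # power that must be supplied upstream
    loss_kw = input_kw - RACK_LOAD_KW    # dissipated as heat along the way
    print(f"{name}: {eff:.1%} efficient, {loss_kw:.1f} kW lost per rack")
```

The point is the shape of the math rather than the specific numbers: losses multiply across stages, and each kilowatt lost also has to be cooled.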

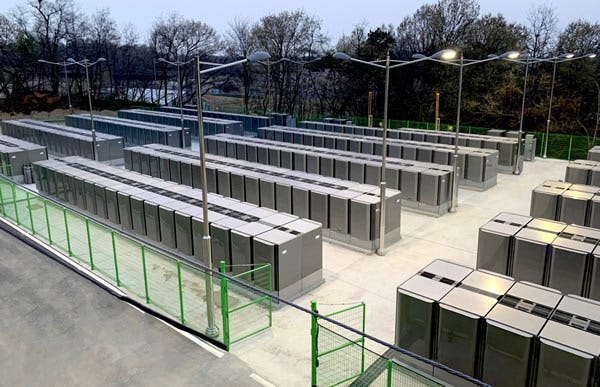

Bloom’s solid oxide fuel cells generate direct current natively. There’s no AC-to-DC conversion step. The cells electrochemically convert natural gas into electricity, and that electricity comes out as DC at a voltage that aligns directly with Nvidia’s 800V DC architecture. You take a Bloom fuel cell, you put it behind the meter at a data center, and you feed DC power directly to the racks. No grid. No transformers. No interconnection queue. No three-year wait.

The efficiency gain from eliminating conversion stages is real, if hard to pin down precisely without site-specific engineering data. But the speed advantage is the one that’s changing the economics. If you can deploy power in 90 days instead of waiting years for a grid connection, you can start training models years earlier. In an industry where six months of delay can mean losing the frontier, that kind of speed advantage stops being a convenience and starts looking like a strategic weapon.

The origin of this technology at NASA adds a layer of texture that’s hard to ignore. Sridhar designed fuel cells for a planet with no grid infrastructure at all. The entire point was autonomous power generation in a place where you couldn’t rely on anything external. The cells had to be efficient, self-contained, and capable of running on whatever fuel source was available. Twenty-four years later, that design philosophy turns out to be exactly what’s needed when Earth’s grid can’t keep up with demand. The same engineering constraints that shaped a Mars survival tool produced a technology that slots neatly into the architecture Nvidia is building for the AI era.

But a voltage match only matters if someone’s writing checks. So I went looking at the order book.

Who’s Actually Buying

When I went through Bloom’s filings and press releases, the adoption story turned out to be stronger than I expected in some places and weaker in others.

The confirmed anchor is Oracle. In July 2025, the two companies announced a partnership with language that tells you exactly what Oracle values. Their joint press release stated: “Bloom and Oracle collaborate to deliver onsite power to Oracle AI data centers within 90 days.” Speed. The entire value proposition, distilled to a single word. Not efficiency, not emissions, not cost per kilowatt-hour. Just: how fast can you get me watts?

Oracle didn’t just sign a contract. They put financial skin in the game in a way that’s unusual for a power procurement deal. According to Bloom’s 8-K filing, Oracle was granted a warrant for 3.53 million shares at $113.28 per share, tied directly to the power partnership. A warrant is a right, not an obligation: Oracle can buy Bloom equity at that specific price, and the position only pays off if Bloom’s stock trades above it. When a hyperscaler takes equity exposure to its power supplier, it’s telling you something about how seriously it takes the relationship.
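For a sense of scale, the warrant terms can be turned into dollar figures. This is a back-of-the-envelope sketch using only the share count and strike price above; the market prices passed to `intrinsic_value` are hypothetical inputs, and the calculation ignores vesting conditions, expiry, and option time value.

```python
WARRANT_SHARES = 3_530_000   # 3.53 million shares, as rounded in the text
STRIKE_PRICE = 113.28        # dollars per share

# Cash Oracle would pay to exercise the full warrant.
exercise_cost = WARRANT_SHARES * STRIKE_PRICE


def intrinsic_value(share_price: float) -> float:
    """Value of exercising immediately at a given market price (never below zero)."""
    return max(share_price - STRIKE_PRICE, 0.0) * WARRANT_SHARES


print(f"Full exercise cost: ${exercise_cost / 1e6:,.1f} million")
for hypothetical_price in (100.0, 150.0, 200.0):  # illustrative prices only
    value = intrinsic_value(hypothetical_price)
    print(f"At ${hypothetical_price:.0f}/share: ${value / 1e6:,.1f} million intrinsic value")
```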

The Q4 2025 earnings tell the broader story. Hyperscaler customers grew from 1 to 6 in a single reporting period. Commercial and industrial backlog rose 135%. Those are not incremental numbers. Going from one major cloud customer to six in one quarter is the kind of adoption curve that changes the trajectory of a company. But the earnings disclosure tells you the count without the names, deal sizes, or timelines for the other five. The jump from 1 to 6 is the most bullish number in the Q4 report, and also the least detailed.

The financial picture has sharpened considerably. Bloom posted record revenue of $2 billion for fiscal year 2025, up 37% year-over-year, with 2026 guidance set at $3.1 billion. The product backlog has doubled to $6 billion. The service backlog stands at $14 billion, representing years of contracted maintenance and performance revenue. Combined, that’s $20 billion in total backlog for a company that just crossed $2 billion in annual revenue.
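The relationships between those figures are easy to check. The sketch below uses only the rounded numbers quoted above, so the outputs are approximations rather than reported values.

```python
# All figures in billions of dollars, as rounded in the text.
fy2025_revenue = 2.0
fy2025_growth = 0.37       # year-over-year
fy2026_guidance = 3.1
product_backlog = 6.0
service_backlog = 14.0

total_backlog = product_backlog + service_backlog
implied_fy2024_revenue = fy2025_revenue / (1 + fy2025_growth)
guided_growth = fy2026_guidance / fy2025_revenue - 1
backlog_to_revenue = total_backlog / fy2025_revenue

print(f"Total backlog: ${total_backlog:.0f}B")
print(f"Implied FY2024 revenue: ${implied_fy2024_revenue:.2f}B")
print(f"Growth implied by 2026 guidance: {guided_growth:.0%}")
print(f"Backlog as a multiple of FY2025 revenue: {backlog_to_revenue:.0f}x")
```

Most of that multiple is service backlog, which is recognized over many years rather than in the near term.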

In November 2024, Bloom signed a gigawatt-scale procurement agreement with AEP to power AI data centers, at a time when total deployed capacity across Bloom’s entire history exceeded 1.3 GW. That single deal was on the same scale as everything the company had deployed in its lifetime. And the Brookfield partnership, structured at $5 billion, adds another layer of institutional commitment.

But here’s where I want to be careful. There’s a narrative circulating that Google is powering data centers with Bloom fuel cells. When I traced the actual history, the picture was different. Google’s Bloom Box deployment dates to 2010, at its Mountain View headquarters campus. It was a 1.6 MW installation providing partial power to the office complex, not a data center. That was 15 years ago, before the AI era, before the power crisis, and before Bloom’s current generation of technology. I couldn’t find evidence of current Google data center deployments using Bloom. And that distinction is important, because the bull thesis depends on hyperscaler data center adoption specifically, not general corporate installations from a decade and a half ago. Similarly, claims about an OpenAI partnership remain unverified in any filing or official announcement I could locate. Maybe these relationships exist. But they’re not in the public record, and analysis that treats them as confirmed is getting ahead of the evidence.

The verified story is strong enough on its own. Oracle is confirmed. Six hyperscalers are in the filings. The backlog is documented. Which makes the gap between what’s confirmed and what’s claimed worth examining.

The Gap Between Story and Math

The bull case for Bloom goes something like this: the U.S. alone is building 100-plus gigawatts of new gas capacity a year for data centers. If Bloom captures even 5-10% of new natural gas power deployment, that’s 5-10 GW per year of fuel cell installations. At estimated revenue of $3.5-4 million per MW installed, plus 10% annual service contracts on the installed base, you get to $20-40 in earnings per share by 2030. At a growth multiple, that’s $500-800 per share.
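The revenue leg of that chain can be sketched directly from the stated inputs. The snippet treats the 100 GW figure as annual additions, which is the bull case’s own assumption, and it stops at product revenue because the step to earnings per share depends on margins and share counts that the bull case does not spell out.

```python
NEW_GAS_GW_PER_YEAR = 100          # bull-case assumption, not a sourced figure
SHARE_RANGE = (0.05, 0.10)         # Bloom's assumed share of new gas deployment
REVENUE_PER_MW_USD = (3.5e6, 4.0e6)


def product_revenue_usd(new_gas_gw: float, share: float, revenue_per_mw: float) -> float:
    """Annual product revenue implied by a share of new gas capacity."""
    installed_mw = new_gas_gw * 1000 * share
    return installed_mw * revenue_per_mw


low = product_revenue_usd(NEW_GAS_GW_PER_YEAR, SHARE_RANGE[0], REVENUE_PER_MW_USD[0])
high = product_revenue_usd(NEW_GAS_GW_PER_YEAR, SHARE_RANGE[1], REVENUE_PER_MW_USD[1])
print(f"Implied annual product revenue: ${low / 1e9:.1f}B to ${high / 1e9:.1f}B")
```

Every output scales linearly with the 100 GW input, so any softness in that number flows straight through to the revenue range.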

I’ve seen variations of this math circulating, and the logic is internally consistent. It’s also built on assumptions that deserve scrutiny.

Start with the base. The Global Energy Monitor data shows the global gas pipeline at 1,047 GW, with 249 GW of new capacity entering development in 2025. These are big numbers. But the specific claim that 100 GW of new natural gas generation is being built annually in the U.S. for data centers is a figure I couldn’t pin to a single authoritative source. The actual projections vary significantly depending on who’s making them and what assumptions they’re using about AI growth, efficiency gains, and the split between new-build gas, renewables, and nuclear. The direction is clear. The precise number powering the bull case models is softer than it looks.

Now look at where Bloom actually is. The company is scaling toward 2 GW of annual production capacity by the end of 2026. That’s against a lifetime total of 1.3 GW deployed over two decades. Reaching 2 GW annual output would represent a genuine step-change, but it’s still 2.5 to 5 times short of the 5-10 GW per year the most optimistic models require by 2030. And keep in mind: production capacity is not the same as deployed and revenue-generating installations. Building the factory is one thing. Filling it with orders, manufacturing at yield, and installing units across dozens of sites simultaneously is a different operational challenge entirely.
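The size of that gap is simple division. A small sketch using only the capacity figures in the text:

```python
TARGET_CAPACITY_GW = 2.0             # annual production target for end of 2026
LIFETIME_DEPLOYED_GW = 1.3           # cumulative deployments to date
BULL_CASE_GW_PER_YEAR = (5.0, 10.0)  # range the optimistic models require by 2030

# How many times larger annual output must be than the 2026 target.
gap_low = BULL_CASE_GW_PER_YEAR[0] / TARGET_CAPACITY_GW
gap_high = BULL_CASE_GW_PER_YEAR[1] / TARGET_CAPACITY_GW

# One year at target capacity compared with everything deployed so far.
target_vs_lifetime = TARGET_CAPACITY_GW / LIFETIME_DEPLOYED_GW

print(f"Bull case needs {gap_low:.1f}x to {gap_high:.1f}x the 2026 capacity target")
print(f"One year at target capacity is {target_vs_lifetime:.2f}x lifetime deployments")
```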

The revenue trajectory shows the same tension. Bloom guided for $3.1 billion in 2026 revenue, which would be impressive, a 55% jump from the $2 billion they just posted. But the bull case models I’ve seen require revenue to reach $15-20 billion or more by 2030 to justify triple-digit stock prices. That’s a 7.5- to 10-fold increase in five years from a company that took over two decades to reach $2 billion.
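Put as a compound annual growth rate, the requirement looks like this. The sketch assumes the $15-20 billion target lands in fiscal 2030 and measures from both the fiscal 2025 result and the 2026 guidance; the year counts are my reading of the timeline, not figures from the filings.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from `start` to `end`."""
    return (end / start) ** (1 / years) - 1


FY2025_REVENUE = 2.0       # $B, reported
FY2026_GUIDANCE = 3.1      # $B, guided
TARGET_2030 = (15.0, 20.0) # $B, bull-case range

for label, base, years in (
    ("from FY2025 actual", FY2025_REVENUE, 5),
    ("from FY2026 guidance", FY2026_GUIDANCE, 4),
):
    for target in TARGET_2030:
        multiple = target / base
        rate = cagr(base, target, years)
        print(f"{label} to ${target:.0f}B: {multiple:.1f}x, {rate:.0%} per year for {years} years")
```

Either starting point requires sustaining roughly the growth rate of the 2026 guidance for every year through 2030.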

Where the math gets more interesting is the service backlog. That $14 billion in contracted service revenue, paired with a 100% service attach rate on deployments, suggests a genuinely durable business model underneath the headline growth. Every unit Bloom installs generates a multi-year service contract. That’s recurring, high-margin revenue that compounds as the installed base grows. It’s the part of the business model that looks least like a cleantech story and most like a software-style annuity stream.

But the service stream is downstream of product installations. If product growth disappoints, the service compounding slows with it. The service backlog is real and valuable. It just can’t grow independently of the product business that feeds it.

So which version of Bloom is more likely? The answer probably depends on something most investors aren’t watching closely enough.

The Execution Question

Bloom Energy went public in 2018. The years since have included a pattern that long-term shareholders know too well: earnings misses, revised guidance, and the slow grind of scaling a hardware company in an industry that doesn’t reward patience. The stock has risen over 400% on AI enthusiasm, with bubble concerns voiced in mainstream financial media. The market is pricing in a version of Bloom that has never existed. Whether the version that does exist right now is different enough from the one that disappointed, I honestly don’t know.

But there are reasons to think it might be. The AEP gigawatt deal in November 2024 was a scale milestone. Oracle’s warrant structure ties a major hyperscaler’s financial interests directly to Bloom’s success, which is a different kind of commitment than a purchase order. The jump from one to six hyperscaler customers in a single quarter suggests something structural is shifting in how data center operators think about onsite power.

KR Sridhar has framed the moment with a line that keeps echoing in my head: “AI dominance now depends on energy dominance.” Bold, sure. But increasingly hard to argue with when you watch Microsoft, Meta, Oracle, and Amazon scrambling to lock up power capacity of any kind. The hyperscalers aren’t choosing between power sources based on ideology. They’re choosing based on what can be online in months instead of years. And Bloom’s 90-day deployment pitch hits that need more directly than almost anything else on the market.

But I keep coming back to the manufacturing gap. Going from 1.3 GW cumulative over a lifetime to 2 GW annual production by the end of 2026 is a massive operational leap. Manufacturing fuel cells at gigawatt scale is not the same as manufacturing them at hundreds of megawatts. Supply chains, quality control, installation logistics, workforce training: these are the mundane, unglamorous factors that determine whether a company with the right product at the right time actually captures the moment or watches it pass.

Bloom’s history says: be skeptical. The current demand environment says: be open to the possibility that this time, the constraint wasn’t the technology or the market. It was the absence of a use case urgent enough to justify the cost and the premium over grid power. AI infrastructure may be that use case. When a hyperscaler is losing millions of dollars per day in delayed training runs because the grid connection hasn’t come through, the cost-per-kilowatt-hour comparison changes entirely. You’re no longer comparing Bloom to cheap grid power. You’re comparing Bloom to no power at all for the next three years.

And that reframe explains why the economics may work now in a way they didn’t before. Bloom has always been more expensive than grid power on a pure unit-cost basis. But when the alternative is not building, the premium becomes a rounding error against the revenue at stake.

I genuinely don’t know which story wins. And I think anyone who tells you they do with certainty is selling something.

What It Means

Bloom Energy sits at a genuine technical intersection that didn’t exist three years ago. The 800V DC alignment with Nvidia’s architecture, the Oracle commitment, the hyperscaler customer expansion from 1 to 6, the $6 billion product backlog and $14 billion service backlog, the record revenue and accelerating guidance: all of it checks out in the filings.

What hasn’t been demonstrated yet is the manufacturing scale, the sustained execution, or the market share capture that the most aggressive models require. The story is somewhere between the bears who see another cleantech disappointment and the bulls who see $500-plus per share. The filings suggest the bears are underestimating the demand shift. The history suggests the bulls are underestimating the execution risk. Both are probably right about what they’re seeing.

Who benefits here? Companies that can deliver power behind the meter, at speed, in DC format. Bloom is the most visible fuel cell player in this space, but not the only one. Natural gas infrastructure broadly benefits from the AI power buildout. Hyperscalers who secure reliable onsite power early get to build faster than those waiting on grid connections, which in a frontier AI race is the whole game.

Who gets pressured? Traditional utilities facing grid connection backlogs that stretch for years. Renewable-only power strategies that can’t match the density or reliability AI workloads require. And Bloom itself, which now carries the weight of expectations that have run far ahead of current revenue. The stock’s 400-plus percent run has priced in execution that hasn’t happened yet. If the next few quarters look like the old Bloom, the repricing will be severe.

What to Watch

Oracle deployment results are the first and most important proof point. Does the 90-day installation actually work at scale, across multiple sites, with the reliability a hyperscaler demands? Everything else is downstream of this.

The identity and deal sizes of the other five hyperscaler customers. The jump from 1 to 6 is the most significant number in the Q4 earnings and the least detailed. Who they are and how much they’ve committed determines whether the adoption curve is broadening or shallow.

The 2 GW annual production capacity target by end-2026. Whether Bloom hits this determines if the growth trajectory is even physically possible. Miss it, and every forward projection needs to be revised downward.

Any official Nvidia partnership or endorsement. Right now, the 800V alignment is a technical fact without a commercial relationship behind it. An official Nvidia stamp would be a major catalyst. Its absence isn’t damning, but it’s worth tracking.

Quarterly revenue trajectory toward the $3.1 billion 2026 guide. Misses here would confirm the historical pattern of overpromising. Beats would break it. After years of disappointment, the next four quarters are where Bloom either earns or loses the market’s trust. The backlog gives management more visibility than they’ve ever had, which makes a miss harder to explain and more damaging if it comes.

Sridhar built his fuel cell for a planet with no power grid. Twenty-four years later, the most valuable companies on Earth are discovering they have the same problem. They have the chips, the capital, the land, and the customers. What they don’t have is the watts. Bloom might be the answer. It also might be another cleantech company that had the right technology and the wrong decade, except this time the decade finally caught up and the factory didn’t.

That’s the part that isn’t in the filings yet.